How AI Learned to Talk for Hours (Plus: Why Everyone's Obsessing Over Nano Banana): Tokenizer Edition #3

This week's most valuable AI resources

Hey there! Remember when we thought 30-second voice clips were impressive? Microsoft just casually dropped a model that does 90-minute conversations with multiple speakers. Meanwhile, a 14B parameter model is out here embarrassing 671B models at math problems. Either we've hit some kind of efficiency singularity, or everyone's been doing this wrong the whole time.

New here?

The Tokenizer is my resource-focused newsletter edition where I curate the best papers, videos, articles, tools, and learning resources from across the AI landscape. Consider it your weekly dose of everything you need to stay ahead in machine learning.

TL;DR

What caught my attention this week:

• 📄 Papers: Long-form speech synthesis that actually works, agentic reasoning models that punch above their weight class, and production-scale training frameworks

• 🎥 Videos: Practical agent building without frameworks, advanced context engineering techniques, and rapid prototyping workflows

• 📰 Reads: The shift from RAG to context engineering, implications of mass AI deployment, and browser security for AI agents

• 🛠 Tools: Claude Code configuration templates that eliminate setup headaches, plus interactive transformer visualizations that make complex concepts accessible

• 🎓 Learning: Behind-the-scenes look at Google's viral Nano Banana image model that's dominating AI rankings

📄 5 Papers

VibeVoice Technical Report

https://arxiv.org/abs/2508.19205 | GitHub

Forget 30-second voice clips. VibeVoice generates 90-minute podcasts with natural conversation between up to 4 speakers, complete with realistic turn-taking and speaker consistency. The breakthrough is a speech tokenizer that compresses audio 80x better than existing models while preserving quality. Instead of stitching clips together, it actually understands dialogue flow and generates conversations that sound human. If you've been waiting for AI that can handle long-form audio without falling apart, this is it.

rStar2-Agent: Agentic Reasoning Technical Report

https://arxiv.org/abs/2508.20722 | GitHub

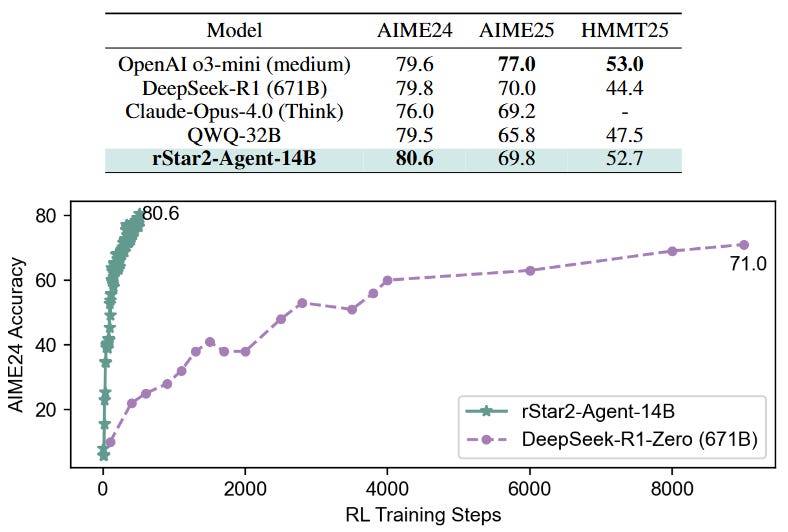

A 14B model just outperformed DeepSeek-R1's 671B parameters on math reasoning. rStar2-Agent hits 80.6% on AIME24 and 69.8% on AIME25 by learning to use Python tools strategically rather than just generating longer reasoning chains. The model reflects on code execution feedback, refines its approach, and gets better results with shorter responses. The training uses a novel RL algorithm that handles coding environment noise by keeping successful trajectories while learning from failures.

AWorld: Orchestrating the Training Recipe for Agentic AI

https://arxiv.org/abs/2508.20404 | GitHub

Training AI agents is painfully slow because they need thousands of environment interactions to learn anything useful. AWorld solves this by running agent training across clusters, making it 14.6x faster than single-machine approaches. They used this speedup to train a model that jumped from 21.59% to 32.23% accuracy on GAIA, a benchmark so hard that most models fail badly. The open-source system gives you the complete pipeline for scalable agent training.

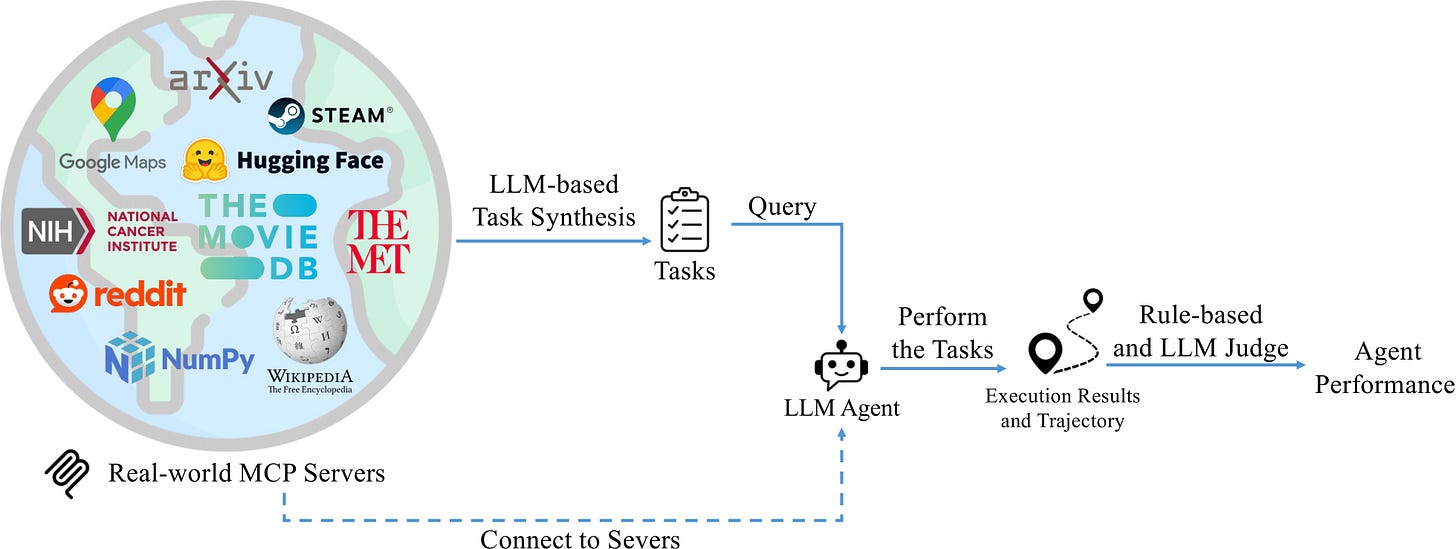

MCP-Bench: Benchmarking Tool-Using LLM Agents with Complex Real-World Tasks via MCP Servers

https://arxiv.org/abs/2508.20453 | GitHub

Most AI agent benchmarks test toy scenarios with explicitly named tools. MCP-Bench connects agents to 28 live servers with 250 real tools across finance, science, and other domains, then asks them to figure out multi-step workflows from vague instructions. Think "analyze this company's financials and compare to competitors" rather than "use the get_stock_price tool." It reveals why agents that ace simple benchmarks still struggle with actual work.

VoxHammer: Training-Free Precise and Coherent 3D Editing in Native 3D Space

https://arxiv.org/abs/2508.19247 | GitHub

Editing 3D models usually means the unchanged parts get corrupted, or the whole thing looks weird. VoxHammer fixes this by working directly in 3D space instead of rendering multiple views and stitching them back together. It preserves the exact regions you don't want to change while integrating your edits cleanly. No training is required. Just point it at a 3D model and edit it away. Useful if you're working with 3D content and tired of tools that mess up everything you didn't touch.

🎥 4 Videos

Building AI Agents in Pure Python

Skip the frameworks and learn to build agents with direct API calls. Dave Ebbelaar walks you through structured output with Pydantic, tool integration via function calls, and workflow patterns like routing and chaining in this 45-minute tutorial. You'll build a working calendar agent and understand deployment considerations. Good if you want to understand what's actually happening under the hood instead of relying on black-box libraries.

Advanced Context Engineering for Agents

Context engineering beats prompt engineering for production AI systems. Dexter Horthy, founder of Human Layer, shows you how to systematically provide AI with the right information at the right time, manage memory across long conversations, and build systems that don't break when contexts get complex. If your AI agents work in demos but fail in real applications, this explains why and how to fix it.

How do AI images and videos actually work

Ever wondered how DALL-E or Sora actually generate images and videos? This breakdown explains diffusion processes, attention mechanisms, and temporal consistency without drowning you in math. You'll understand why some prompts work better than others and what's actually happening when you hit "generate."

We prototyped 5 features in 84 mins

Watch a real-time coding session where Aakash Gupta and Colin Matthews build 5 AI-powered features in 84 minutes using modern tools like Bolt, Replit, and Cursor. No perfect takes. Just raw footage of rapid prototyping with AI assistance. Good for seeing how fast development actually works when you know the right tools and workflows.

📰 3 Curated Reads

"RAG is Dead, Context Engineering is King" — with Jeff Huber of Chroma

Chroma's co-founder argues that the industry is shifting from retrieval-augmented generation to more sophisticated context engineering approaches. The discussion covers how to systematically design context for AI systems, moving beyond simple document retrieval to understanding when and how to provide relevant information. Huber provides frameworks for context management that work reliably in production, addressing the limitations of traditional RAG architectures.

Mass Intelligence

Ethan Mollick examines what happens when AI capabilities become widely accessible, exploring the societal implications of "mass intelligence" where cognitive capabilities are no longer scarce resources. The piece analyzes how widespread AI adoption might reshape work, education, and creative industries, providing frameworks for understanding and adapting to this transition. Essential reading for anyone thinking about AI's broader impact beyond technical capabilities.

Agentic Browser Security

https://brave.com/blog/comet-prompt-injection/

Brave's security team details vulnerabilities in AI agents that interact with web browsers, specifically focusing on prompt injection attacks through manipulated web content. The post reveals how malicious actors can hijack agentic systems by embedding instructions in websites, demonstrating real attack vectors and proposing defensive measures. Critical reading as AI agents gain browser access and interact with untrusted web content.

🛠 2 Tools & Repos

Claude Code Templates

This CLI tool eliminates Claude Code setup complexity by providing ready-to-use configurations for React, Vue, Angular, Django, FastAPI, Rails, and more frameworks, each including optimized CLAUDE.md configurations and best practices. Instead of manually researching configurations for each project, you get instant access to 100+ agents, commands, settings, and MCPs. The platform includes analytics dashboards for monitoring Claude Code usage and comprehensive diagnostics to ensure optimal performance.

Transformer Explainer

https://poloclub.github.io/transformer-explainer/

This interactive visualization runs a live GPT-2 model directly in your browser, allowing you to experiment with custom text and observe in real-time how internal transformer components predict the next tokens. The tool uses Sankey diagrams to show information flow through the model, letting you drill down from high-level architecture to mathematical operations. You can adjust temperature parameters and see immediate effects on probability distributions, making abstract transformer concepts tangible and explorable.

🎓 1 Pick of the Week

Behind the scenes with Nano Banana

Google quietly tested its image generation model under the code name "Nano Banana" before revealing it as Gemini 2.5 Flash Image. This video explores the model that topped LMArena rankings and is now integrated into Gemini. The key breakthrough is character consistency, where you can edit the same person across multiple prompts without their face changing. Worth watching to understand what's driving the current buzz around Google's image capabilities.

Thanks for reading The Tokenizer! If you found something useful here, share it with someone who might benefit. And if you want more curated insights like this, consider subscribing to Gradient Ascent.

love this newsletter format, keep going Sairam!

Wow packed with tons of good info!